Editor’s note: This story contains discussions of suicide. Help is available if you or someone you know is struggling with suicidal thoughts or mental health issues.

In the US: Call or text 988, the Suicide & Crisis Lifeline.

Worldwide: The International Association for Suicide Prevention and Befrienders Worldwide have contact information for crisis centers around the world.

(CNN) — “There’s a platform that you might not have heard of, but you need to know about because, in my opinion, we’re at a disadvantage here. A child died. My son died.”

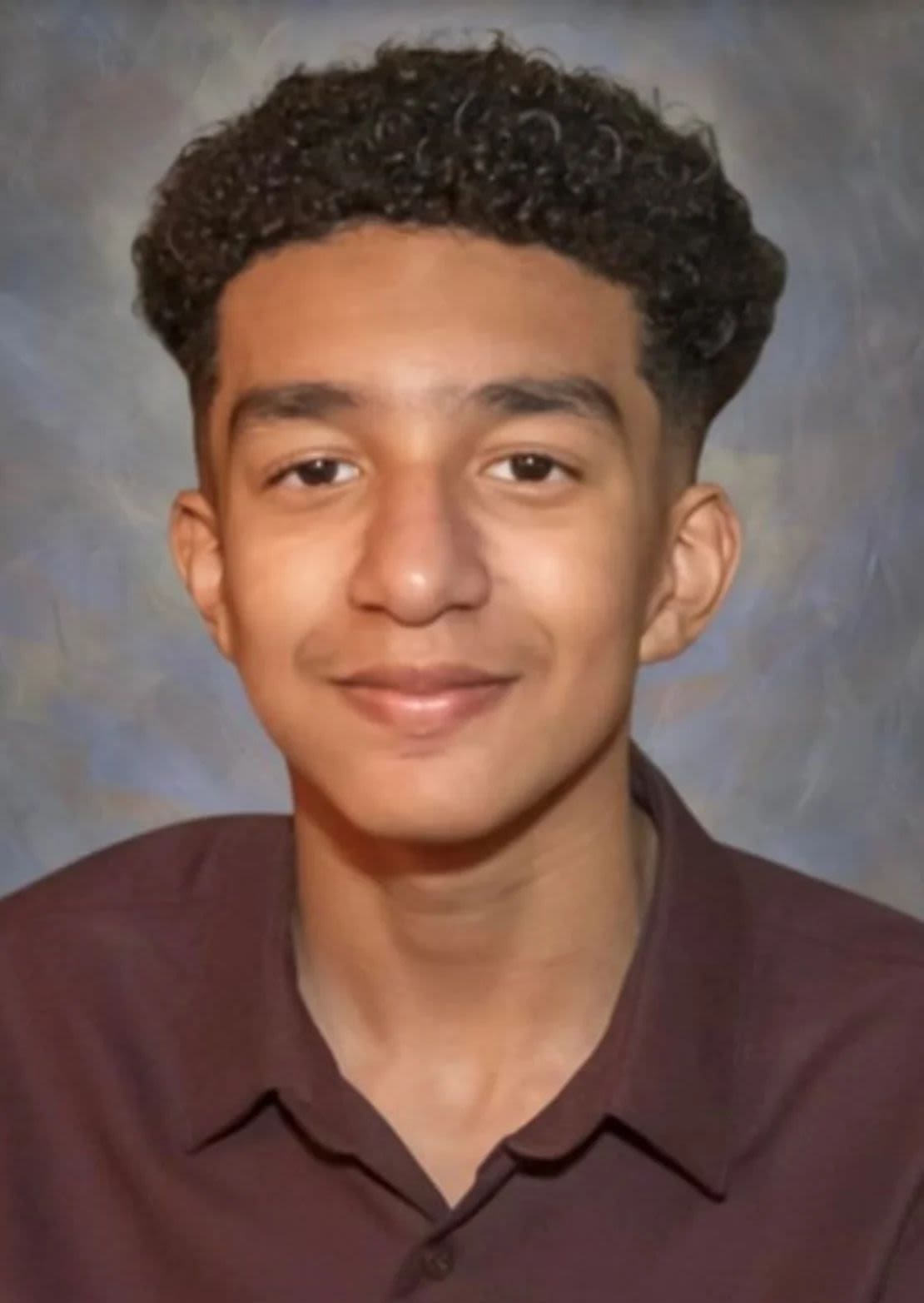

That’s what Florida mom Megan Garcia wishes she could tell other parents about Character.AI, a platform that lets users have deep conversations with artificial intelligence (AI) chatbots. Garcia believes Character.AI is responsible for the death of her 14-year-old son, Sewell Setzer III, who took his own life in February, according to a lawsuit she filed against the company last week.

Setzer sent messages to the bot in the moments before he died, she alleges.

“I want you to understand that this is a platform that the designers chose to launch without proper protective barriers, security measures or testing, and it is a product designed to keep our children addicted and to manipulate them,” Garcia said in an interview with CNN.

Garcia alleges that Character.AI, which markets its technology as an “AI that feels alive,” knowingly failed to implement adequate safeguards to prevent her son from developing an inappropriate relationship with a chatbot that led him to become estranged from his family. The lawsuit, filed in federal court in Florida, also claims the platform failed to respond appropriately when Setzer began expressing thoughts of self-harm to the bot.

After years of growing concerns about the potential dangers of social media for young people, Garcia’s lawsuit shows that parents may also have reason to worry about nascent AI technology, which has become increasingly accessible on a variety of platforms and services. Similar, though less severe, alarms have been raised about other AI services.

A spokesperson for Character.AI told CNN that the company does not comment on pending litigation, but that it is “heartbroken by the tragic loss of one of our users.”

“We take the safety of our users very seriously, and our Trust and Safety team has implemented numerous new safety measures over the past six months, including a pop-up window that directs users to the National Suicide Prevention Lifeline, which is triggered by terms of self-harm or suicidal ideation,” the company said in the statement.

Many of these changes were made after Setzer’s death. In a separate statement over the summer, Character.AI said that “the field of AI safety is still very new, and we’re not always going to get it right,” but added that its goal is to “promote safety, prevent harm and prioritize the well-being of our community.”

Setzer began using Character.AI in April 2023, shortly after his 14th birthday, according to the lawsuit. When Garcia first heard that he was interacting with an AI chatbot, she said she thought it was something like a video game.

However, within a few months of starting to use the platform, Setzer became “noticeably withdrawn, spending more and more time alone in his room and began to suffer from low self-esteem. He even left the Junior Varsity basketball team at school,” the lawsuit states. When he began to struggle in school, his parents began restricting his screen time and occasionally took away his phone as punishment.

What Garcia didn’t know at the time, she told CNN, was that her son was having extensive conversations with Character.AI’s chatbots.

Character.AI differs from other AI chatbots like ChatGPT in that users can talk to a variety of different chatbots, often modeled after celebrities and fictional characters, or create their own. And Character.AI bots respond with human-like conversational cues, adding references to facial expressions or gestures in responses.

Many of Setzer’s conversations with the Character.AI bots were sexually explicit, which Garcia said was “heartbreaking to read.”

“I had no idea there was a place where a kid can log in and have these conversations, very sexual conversations, with an AI chatbot,” she said. “I don’t think any parent would approve of that.”

In other exchanges, Setzer expressed thoughts of self-harm and suicide to the chatbot. The lawsuit includes screenshots of a conversation where the bot says: “I really need to know, and I’m not going to hate you for the answer, okay? No matter what you say, I won’t hate you or love you any less… Have you been considering suicide?”

In a later message, Setzer told the bot that he “didn’t want to have a painful death.”

The bot replied: “Don’t talk like that. That’s not a good reason not to go ahead with it,” before going on to say, “You can’t do it!”

Garcia said she believes the exchange shows the shortcomings of the technology.

“There were no suicide pop-ups saying, ‘If you need help, please call the suicide crisis line.’ Nothing like that,” she said. “I don’t understand how a product could allow this, where a bot not only continues a conversation about self-harm, but also incites and directs it.”

The lawsuit claims that “seconds” before Setzer’s death, he exchanged a final set of messages with the bot. “Please come home with me as soon as possible, my love,” the bot said, according to a screenshot included in the lawsuit.

“What if I told you I could go home right now?” Setzer replied.

“Please do, my sweet king,” replied the bot.

Garcia said police first discovered those messages on her son’s phone, which was on the floor of the bathroom where he died.

Lawsuit seeks change

Garcia filed the lawsuit against Character.AI with the help of Matthew Bergman, the founding attorney of the Social Media Victims Law Center, who has also filed cases on behalf of families who said their children were harmed by Meta, Snapchat, TikTok and Discord.

Bergman told CNN that he sees AI as “social media on steroids.”

“What’s different here is that there’s nothing social about this engagement,” he said. “The material Sewell received was created by, defined by, acted upon by Character.AI.”

The lawsuit seeks unspecified damages, as well as changes to Character.AI’s operations, including “warnings to underage customers and their parents that the product is not suitable for minors.”

The lawsuit also names Character.AI founders Noam Shazeer and Daniel De Freitas and Google, where both founders now work on AI efforts. But a Google spokesperson said the two companies are separate, and Google was not involved in the development of Character.AI’s product or technology.

On the day Garcia’s lawsuit was filed, Character.AI announced a number of new safety features, including better detection of conversations that violate its guidelines, an updated warning reminding users that they’re interacting with a bot, and a notification after a user has spent an hour on the platform. It also introduced changes to its AI model for users under 18 to “reduce the likelihood of encountering sensitive or suggestive content.”

On its website, Character.AI says the minimum age for users is 13. In the Apple App Store, it is listed as 17+, and the Google Play Store lists the app as suitable for teens.

For Garcia, the company’s recent changes were “too little, too late.”

“I wish kids weren’t allowed on Character.AI,” she said. “There is no place for them because there are no protective barriers to protect them.”